From a young age, our lives are filled with assessments: standardized tests, driving exams, and placement tests. If a business or government agency tests the English or foreign language skills of potential hires and current staff, there is a good chance that it uses ALTA language testing. Creating a valid assessment of an individual’s language skill is an important and difficult task, one that requires us to take many steps to ensure that every test we administer meets the Standards for Educational and Psychological Testing.

So, what does it mean for a test to be valid?

In psychometric terms, a test’s validity is the degree to which theory and evidence support the interpretation of the test’s scores for its intended purpose. In other words, a valid language test actually assesses language ability, and its scores can be defended.

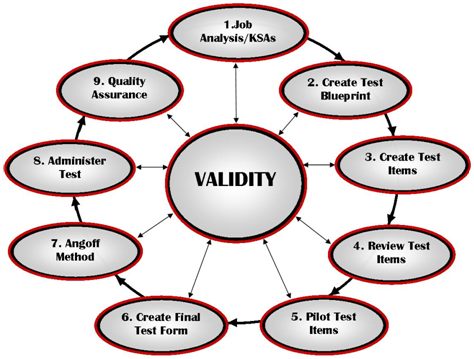

ALTA devotes considerable time and resources to ensuring that our language tests are valid. While the process is complex, here is a basic synopsis of nine important steps we take, each of which contributes to the overall validity of a language assessment. Figure 1 illustrates this validation cycle, and each step is described below:

Figure 1: Validation Cycle

1. Job Analysis/KSAs:

The first step in test development is to identify the knowledge, skills, and abilities (KSAs) that the test will be designed to measure. For tests that are designed to qualify an individual to perform a specific job, these KSAs are identified through a job study in which individuals who know what the job entails, known as subject-matter experts (SMEs), are interviewed. Identifying the KSAs is a crucial step because it gives focus to the development efforts that follow.

2. Create a Test Blueprint:

The test blueprint is created based on the KSAs identified and their relative importance to the job. The blueprint tells the test developer what content will be included in the test, how much content belongs to each skill area, and any other instructions needed to develop the content properly. Using the blueprint as a guide, test developers are engaged to create the actual test items.
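To make the idea of "amount of content in each skill area" concrete, here is a minimal sketch of how importance weights could be turned into item counts. The skill areas, the weights, the 40-item test length, and the `allocate_items` helper are all hypothetical and chosen only for illustration; a real blueprint is built from the job study, not from a formula alone.

```python
from math import floor

def allocate_items(weights, total_items):
    """Split total_items across skill areas in proportion to their weights.

    Uses the largest-remainder method so the counts always sum to total_items.
    """
    total_weight = sum(weights.values())
    exact = {area: total_items * w / total_weight for area, w in weights.items()}
    counts = {area: floor(share) for area, share in exact.items()}
    leftover = total_items - sum(counts.values())
    # Hand the remaining items to the areas with the largest fractional parts.
    by_remainder = sorted(exact, key=lambda a: exact[a] - counts[a], reverse=True)
    for area in by_remainder[:leftover]:
        counts[area] += 1
    return counts

# Hypothetical importance weights, as SMEs might rate them in a job study.
weights = {"listening": 3, "speaking": 3, "reading": 2, "writing": 1}
blueprint = allocate_items(weights, total_items=40)
print(blueprint)  # {'listening': 13, 'speaking': 13, 'reading': 9, 'writing': 5}
```

The largest-remainder step is only there so that rounding never leaves the test one item short or one item over.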

3. Create Test Items:

Item development is carried out according to the specifications outlined in the test blueprint. More items are created than the final test requires, to allow for the possibility that some will need to be eliminated based on pilot testing and item analysis results.

4. Review Test Items:

All test items are submitted to a separate panel for review and comment. This panel reviews each test item and verifies that each aligns with the specifications as outlined in the test blueprint. Any need for modification is recorded, and comments are provided to the developers so that the appropriate changes can be made. This review process is repeated for any changes that are made until the pilot version of the test is complete.

5. Pilot Test Items:

Once the final draft version has been reviewed and approved by test developers and the review panel, the items are pilot-tested to gather data on item performance. Pilot testing is done using a sample of candidates representative of the target population. Following the pilot testing, psychometric analysis is performed on the results to evaluate how the test performs.
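The specific analyses vary from test to test, but two statistics from classical test theory are commonly computed on pilot data: item difficulty (the proportion of candidates who answer an item correctly) and item discrimination (how well an item separates stronger from weaker candidates). The sketch below is illustrative only and assumes items scored simply as right or wrong; the `item_analysis` function and the sample responses are made up for this example.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation; returns NaN when either list has no variance."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return float("nan")
    return sxy / sqrt(sxx * syy)

def item_analysis(responses):
    """responses[c][i] is 1 if candidate c answered item i correctly, else 0."""
    totals = [sum(row) for row in responses]
    stats = []
    for i in range(len(responses[0])):
        scores = [row[i] for row in responses]
        difficulty = sum(scores) / len(scores)
        # Correlate the item with the score on the *other* items so the
        # item does not inflate its own discrimination value.
        rest = [t - s for t, s in zip(totals, scores)]
        stats.append({
            "item": i,
            "difficulty": difficulty,
            "discrimination": pearson(scores, rest),
        })
    return stats

# Made-up pilot data: 5 candidates (rows) by 4 items (columns).
responses = [
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 1],
    [0, 0, 0, 1],
    [1, 1, 1, 0],
]
stats = item_analysis(responses)
for row in stats:
    print(row)
```

In this toy data the last item has a negative discrimination value, meaning weaker candidates tended to get it right more often than stronger ones. An item like that would normally be a candidate for revision or removal.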

6. Create Final Test Form:

Results from the statistical analysis determine which items will constitute the final test form, and these items are assembled into the operational version of the test.

7. Angoff Method:

Using the final test versions, an Angoff panel is assembled to determine the cut score of the test, that is, the percentage of items a candidate must answer correctly to pass. Although various standard-setting methods exist, ALTA typically uses the Angoff method, which relies on the panel’s judgments of the percentage of minimally qualified candidates who would answer each item correctly.
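In its most basic form, the arithmetic behind an Angoff cut score is a pair of averages: each judge's estimates are averaged per item, and the item averages are then averaged across the test. The sketch below shows that basic calculation with invented ratings from three hypothetical judges on a four-item test; real panels typically add discussion rounds and other refinements that are not shown here.

```python
from statistics import mean

def angoff_cut_score(ratings):
    """Return the cut score as a fraction of items answered correctly.

    ratings[j][i] is judge j's estimate (between 0 and 1) of the share of
    minimally qualified candidates who would answer item i correctly.
    """
    n_items = len(ratings[0])
    if any(len(judge) != n_items for judge in ratings):
        raise ValueError("every judge must rate every item")
    # Average across judges for each item, then across items.
    item_means = [mean(judge[i] for judge in ratings) for i in range(n_items)]
    return mean(item_means)

# Invented ratings: 3 judges (rows) by 4 items (columns).
ratings = [
    [0.60, 0.80, 0.50, 0.70],
    [0.70, 0.90, 0.40, 0.60],
    [0.50, 0.70, 0.60, 0.80],
]
cut = angoff_cut_score(ratings)
print(f"Cut score: {cut:.0%} of items")
```

With these ratings the item averages are 0.60, 0.80, 0.50, and 0.70, which gives a cut score of about 65 percent.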

8. Administer Test:

Once the cut scores for the final test versions have been determined, the tests are available for operational use. They are administered according to the operational policies set up by the test administrator and scored using a prescribed scoring rubric.

9. Quality Assurance:

Quality assurance is performed continuously to ensure that the items are performing properly over time. Quality assurance also provides a method for monitoring overexposure and identifying items that may have been compromised.
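As a simple illustration of what such monitoring can look like, the sketch below flags items that have been seen in a large share of administrations, or that candidates now answer correctly far more often than they did at pilot. The field names, the thresholds, and the `flag_items` helper are assumptions made for this example, not a description of any particular monitoring system.

```python
def flag_items(item_log, total_administrations, max_exposure=0.5, max_drift=0.15):
    """Return {item_id: [reasons]} for items that warrant a closer look.

    The thresholds are illustrative defaults, not recommended values.
    """
    flagged = {}
    for item_id, record in item_log.items():
        if record["times_seen"] == 0:
            continue  # nothing to evaluate yet
        reasons = []
        exposure = record["times_seen"] / total_administrations
        if exposure > max_exposure:
            reasons.append("overexposed")
        current_difficulty = record["times_correct"] / record["times_seen"]
        # A sharp rise in proportion correct can be a sign of a leaked item.
        if current_difficulty - record["pilot_difficulty"] > max_drift:
            reasons.append("easier than at pilot")
        if reasons:
            flagged[item_id] = reasons
    return flagged

# Made-up usage log covering 1,000 test administrations.
item_log = {
    "A1": {"times_seen": 900, "times_correct": 540, "pilot_difficulty": 0.58},
    "B7": {"times_seen": 300, "times_correct": 270, "pilot_difficulty": 0.62},
    "C3": {"times_seen": 250, "times_correct": 150, "pilot_difficulty": 0.60},
}
flagged = flag_items(item_log, total_administrations=1000)
print(flagged)  # {'A1': ['overexposed'], 'B7': ['easier than at pilot']}
```

A flag here is only a prompt for human review; an item that has become easier may have been compromised, or the candidate population may simply have changed.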

It is important to note that validation is a cycle, and the testing organization should continue reviewing the test and collecting evidence of the test’s validity. At various points in the lifetime of a test, each step may be revisited for review and/or revision.

____________________________________________________________________________________________

ALTA is a leader in language testing and large-scale language solutions for government agencies and corporations nationwide. In addition to being the official language testing provider for the cities of Los Angeles and New York, ALTA works with many of the country’s largest corporate organizations, from DELTA Airlines to Wells Fargo. Learn more about us at altalang.com.